Chapter 12 Interaction Models

12.1 Motivation

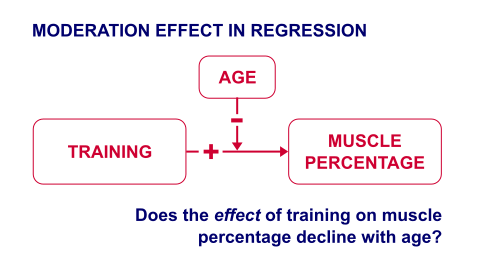

A sports doctor routinely measures the muscle percentages of his clients. He also asks them how many hours per week they typically spend on training. Our doctor suspects that clients who train more are also more muscled. Furthermore, he thinks that the effect of training on muscularity declines with age. In multiple regression analysis, this is known as a moderation or interaction effect illustrated in the figure below.

In mathematical terms an interaction is an additional multiplication term:

\[ muscle = training + age + training*age \]

12.2 Data & Sample

In the following we further explore the aforementioned income-age relationship and how the relationship itself may be influenced by gender and other characteristics.

library(haven)

master <- read_dta("https://github.com/MarcoKuehne/marcokuehne.github.io/blob/main/data/SOEP/practice_en/practice_dataset_eng.dta?raw=true")

# The data comes with Stata labels that do not work with all tidyverse commands

library(sjlabelled)

soep <- remove_all_labels(master)

# Rename German to English variable names

library(tidyverse)

soep <- soep %>%

rename("Age" = "alter",

"Income" = "einkommenj1",

"NACE2" = "branche",

"Persons in HH" = "anz_pers",

"Num_Kids" = "anz_kind",

"Education" = "bildung",

"Health" = "gesund_org",

"Satisfaction" = "lebensz_org",

"Emp" = "erwerb")

# Modify some variables

soep <- soep %>%

mutate(Single = ifelse(`Persons in HH` == 1, 1, 0),

Kids = ifelse(Num_Kids == 0, 0, 1),

Female = sex) %>%

mutate(Industry = case_when(NACE2 %in% c(1,2,3) ~ "Agriculture",

NACE2 %in% c(5:9) ~ "Mining",

NACE2 %in% c(10:32) ~ "Manufacturing",

NACE2 %in% c(35:38) ~ "Energy",

NACE2 %in% c(41:43) ~ "Construction",

NACE2 %in% c(50,51,52,55) ~ "Trade",

NACE2 %in% c(60,61,62,63,64) ~ "Transport",

NACE2 %in% c(65,66,67) ~ "Banking",

NACE2 %in% c(70,71,72,73,74,75,80,85,90,91,92,93,95,98,99) ~ "Services",

TRUE ~ "Other"))

# Round annual income to two digits

soep <- soep %>% mutate(Income = round(Income, 2))

# Build the estimation sample based on the topic

soep <- soep %>%

filter(Emp == 1) %>%

filter(Age <= 65) %>%

filter(Income > 1) %>%

filter(syear == 2019)

# Conduct a complete case analysis

soep <- soep %>% filter(complete.cases(.))The datasummary() function from modelsummary package provides a good overview for clean, selected variables.

library(modelsummary)

soep %>%

dplyr::select(Income, Age, Kids, Female, Satisfaction) %>%

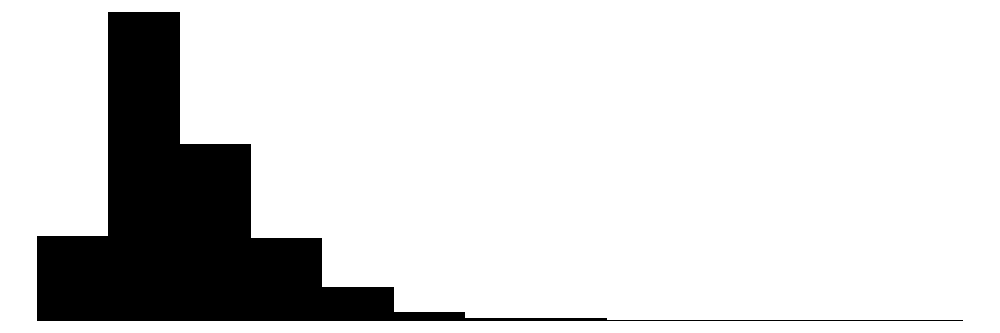

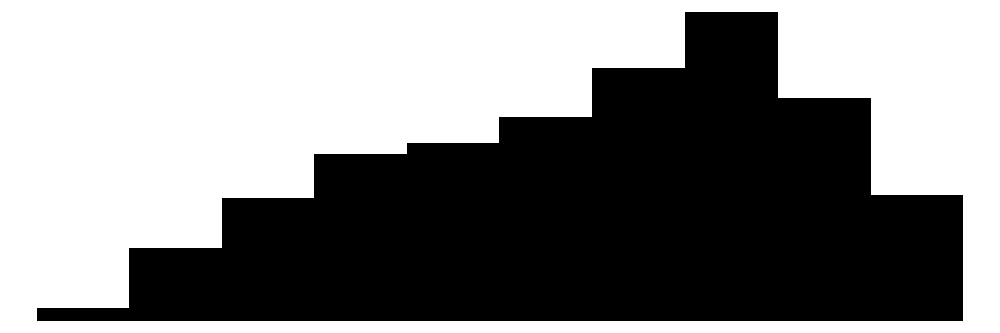

datasummary_skim()| Unique | Missing Pct. | Mean | SD | Min | Median | Max | Histogram | |

|---|---|---|---|---|---|---|---|---|

| Income | 1211 | 0 | 42145.2 | 23488.8 | 915.5 | 37337.3 | 257886.2 |  |

| Age | 47 | 0 | 45.4 | 11.2 | 18.0 | 47.0 | 65.0 |  |

| Kids | 2 | 0 | 0.4 | 0.5 | 0.0 | 0.0 | 1.0 |  |

| Female | 2 | 0 | 0.4 | 0.5 | 0.0 | 0.0 | 1.0 |  |

| Satisfaction | 11 | 0 | 7.6 | 1.4 | 0.0 | 8.0 | 10.0 |  |

The average annual income in this sample is 42145. Notice that the income is left skewed whereas life satisfaction is skewed to the right. As expected, most people earn a low or average income and there are several extreme high incomes at the right. On the other hand, most people are on average pretty satisfied with their lifes in general. The mean of a dummy variables is the share of people with the characteristic coded with 1, i.e. about 40 % of the people have kids. In the following, binary dummy variables are coded as factors.

12.3 Throwback Parallel Slopes

Simple regression is very similar to the correlation between two variables. A multiple regression with one continuous and one dummy variable is called a parallel slopes model. The data looks like this:

Conclusion from Parallel Slopes: The fundamental truth is that the effect of age on income is identical across gender (i.e. for males and females). Males and female are equipped (by nature, for some reason) with a different starting annual income on average. -- Convinced?

12.4 Regression with Moderators

In the following we consider all possible kinds of two-way interactions (between two variables). Higher-order interaction might be useful under special conditions, e.g. in a study where the effect of a new drug depends on the patient's age and gender.

An interaction can be coded implicitly or explicitly in R.

# implicit coding, that automatically extends

lm1 <- lm(mpg ~ vs*am, data=mtcars)

# explicit coding, each single term and their interaction

lm2 <- lm(mpg ~ vs + am + vs:am, data=mtcars)

# only use the interaction term?

lm3 <- lm(mpg ~ vs:am, data=mtcars)

modelsummary(title = 'Coding Interaction in R.',

list("Version 1" = lm1,

"Version 2" = lm2,

"Version 3" = lm3),

gof_omit = 'R2|AIC|BIC|RMSE|Log.Lik.|F')| Version 1 | Version 2 | Version 3 | |

|---|---|---|---|

| (Intercept) | 15.050 | 15.050 | 17.772 |

| (1.002) | (1.002) | (0.826) | |

| vs | 5.693 | 5.693 | |

| (1.651) | (1.651) | ||

| am | 4.700 | 4.700 | |

| (1.736) | (1.736) | ||

| vs × am | 2.929 | 2.929 | 10.599 |

| (2.541) | (2.541) | (1.766) | |

| Num.Obs. | 32 | 32 | 32 |

The single terms vs and am are sometimes called the main effects.

12.4.1 Dummy * Dummy

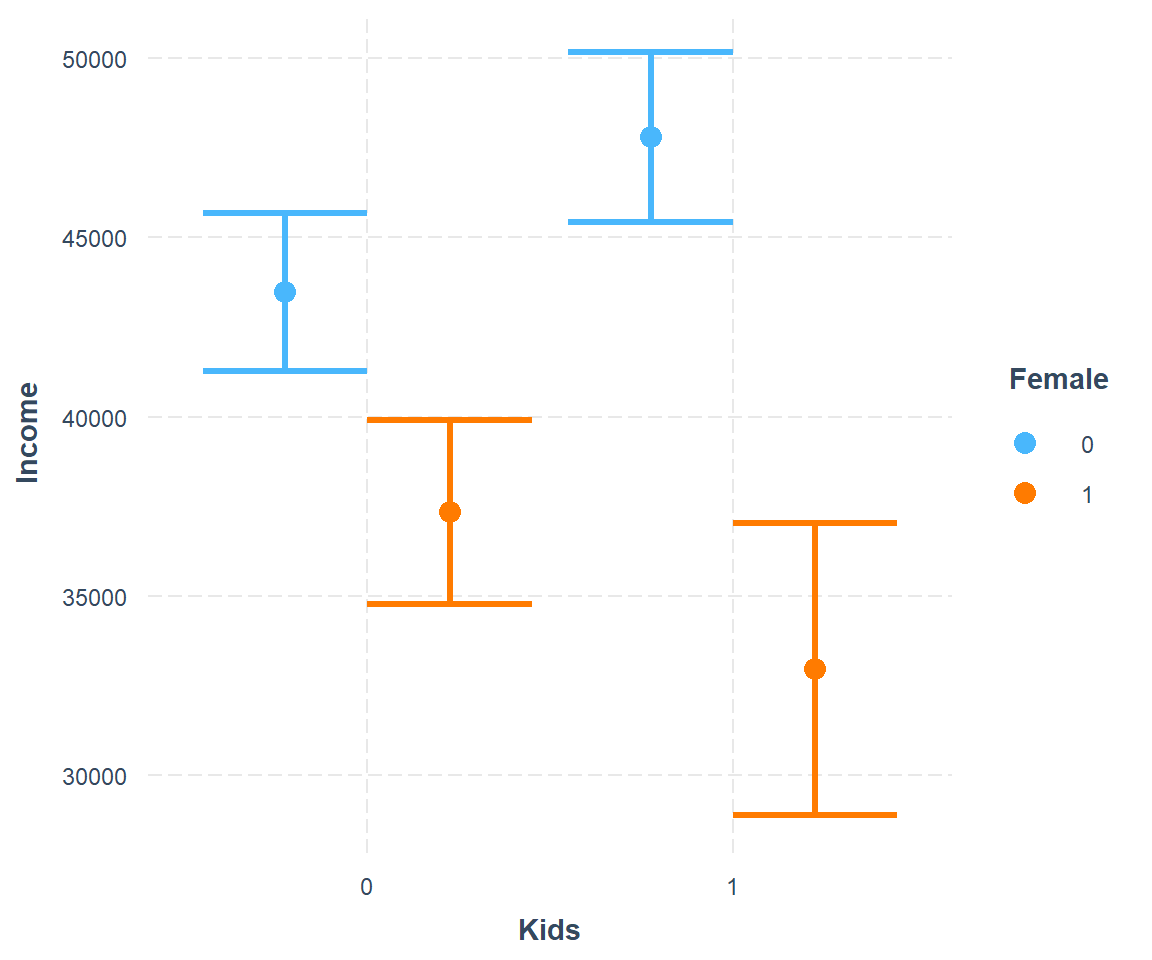

We estimate how income depends on having kids and being female.

interact1 <- lm(Income ~ Kids*Female, data=soep)

modelsummary(title = 'Interaction Model 1.',

list("Income" = interact1),

gof_omit = 'R2|AIC|BIC|RMSE|Log.Lik.|F')| Income | |

|---|---|

| (Intercept) | 43476.056 |

| (1128.318) | |

| Kids1 | 4329.056 |

| (1650.629) | |

| Female1 | -6131.395 |

| (1727.116) | |

| Kids1 × Female1 | -8708.424 |

| (2954.627) | |

| Num.Obs. | 1211 |

We plot the interaction with via cat_plot() from the interactions package. This interaction dedicated package makes visualization easy.

This graph suggests that men earn more than women. Men with kids earn more than men without kids. The kids-effect is reversed for women, which report higher incomes when they do not have kids.

12.4.2 Categorical * Dummy

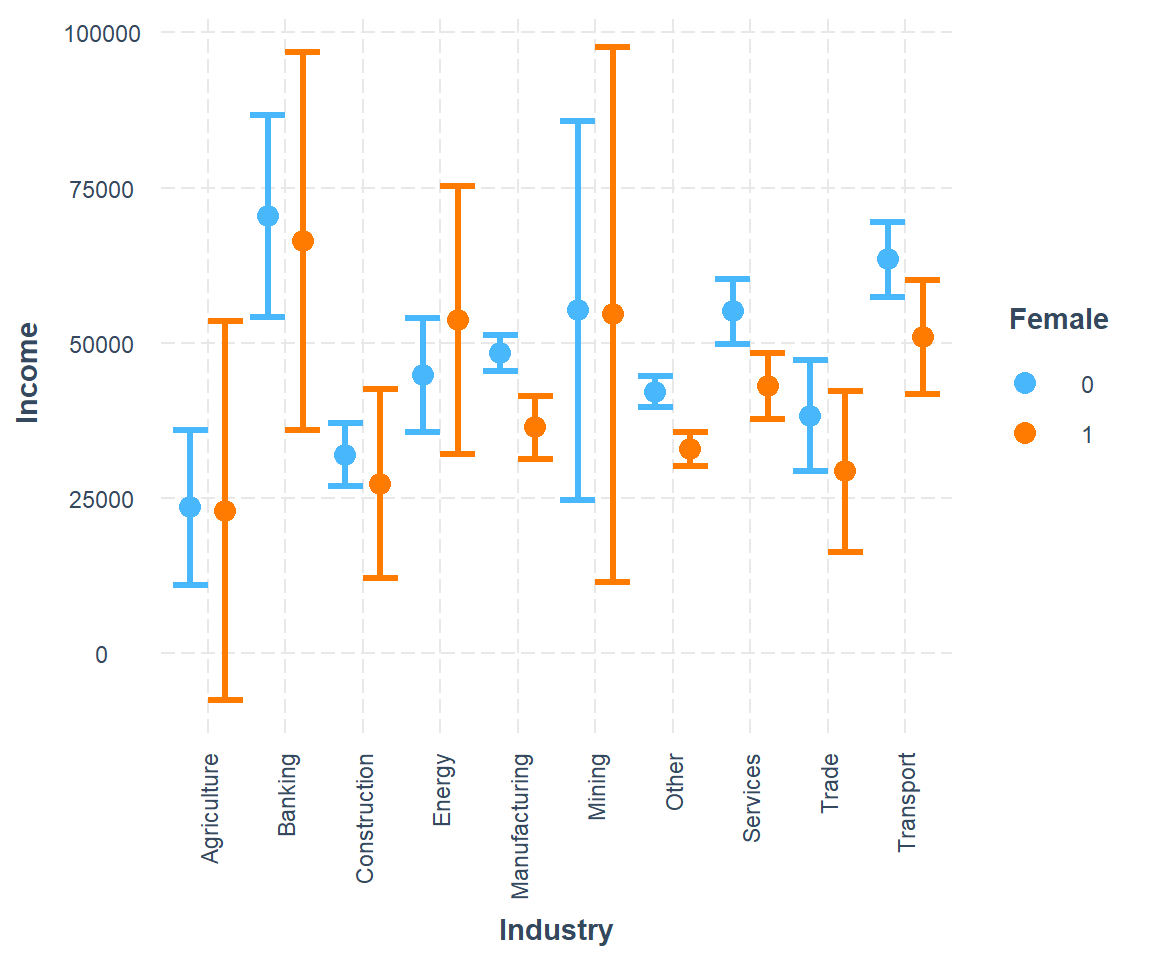

We estimate how income depends on working in different industries and being female.

interact2 <- lm(Income ~ Industry*Female, data=soep)

library(broom)

library(knitr)

# tidy(interact2) %>%

# select(term, estimate, std.error) %>%

# #filter(! is.na(estimate)) %>%

# kable(digits=3)

# modelsummary(title = 'Interaction Model 2.',

# list("Income" = interact2),

# gof_omit = 'R2|AIC|BIC|RMSE|Log.Lik.|F')

parts = 2 ## multi-column parts to display alongside

tidy(interact2) |>

dplyr::select(term, coefficient = estimate) |>

mutate(part = rep(1:parts, each = ceiling(n()/parts), length.out = n()),

row_index = rep(1:ceiling(n()/parts), length.out = n())

) |>

split(~ part) |>

Reduce(f = \(x, y) x |> select(-part) |> left_join(y, by = 'row_index')) |>

dplyr::select(-c(row_index, part)) |>

kable()| term.x | coefficient.x | term.y | coefficient.y |

|---|---|---|---|

| (Intercept) | 23513.113 | Female1 | -549.8783 |

| IndustryBanking | 46946.525 | IndustryBanking:Female1 | -3508.2902 |

| IndustryConstruction | 8443.728 | IndustryConstruction:Female1 | -4093.7365 |

| IndustryEnergy | 21340.939 | IndustryEnergy:Female1 | 9357.9811 |

| IndustryManufacturing | 24884.761 | IndustryManufacturing:Female1 | -11453.9382 |

| IndustryMining | 31740.912 | IndustryMining:Female1 | -130.2967 |

| IndustryOther | 18604.883 | IndustryOther:Female1 | -8681.7468 |

| IndustryServices | 31579.784 | IndustryServices:Female1 | -11550.5649 |

| IndustryTrade | 14766.445 | IndustryTrade:Female1 | -8405.7213 |

| IndustryTransport | 39986.219 | IndustryTransport:Female1 | -12060.6618 |

# The function can be modified by ggplot commands.

cat_plot(interact2, pred = Industry, modx = Female) +

theme(axis.text.x = element_text(angle = 90, vjust = 0.5, hjust=1))

12.4.3 Continuous * Dummy

Note that R automatically adds main effects if you use multiplication operator:

Thus \[ height * female \]

translates to

\[ height + female + height*female \]

interact3 <- lm(Income ~ Age*Female, data=soep)

modelsummary(title = 'Interaction Model 2.',

list("Income" = interact3),

gof_omit = 'R2|AIC|BIC|RMSE|Log.Lik.|F')| Income | |

|---|---|

| (Intercept) | 17367.198 |

| (3438.645) | |

| Age | 612.892 |

| (72.860) | |

| Female1 | 4649.196 |

| (5506.496) | |

| Age × Female1 | -296.045 |

| (118.698) | |

| Num.Obs. | 1211 |

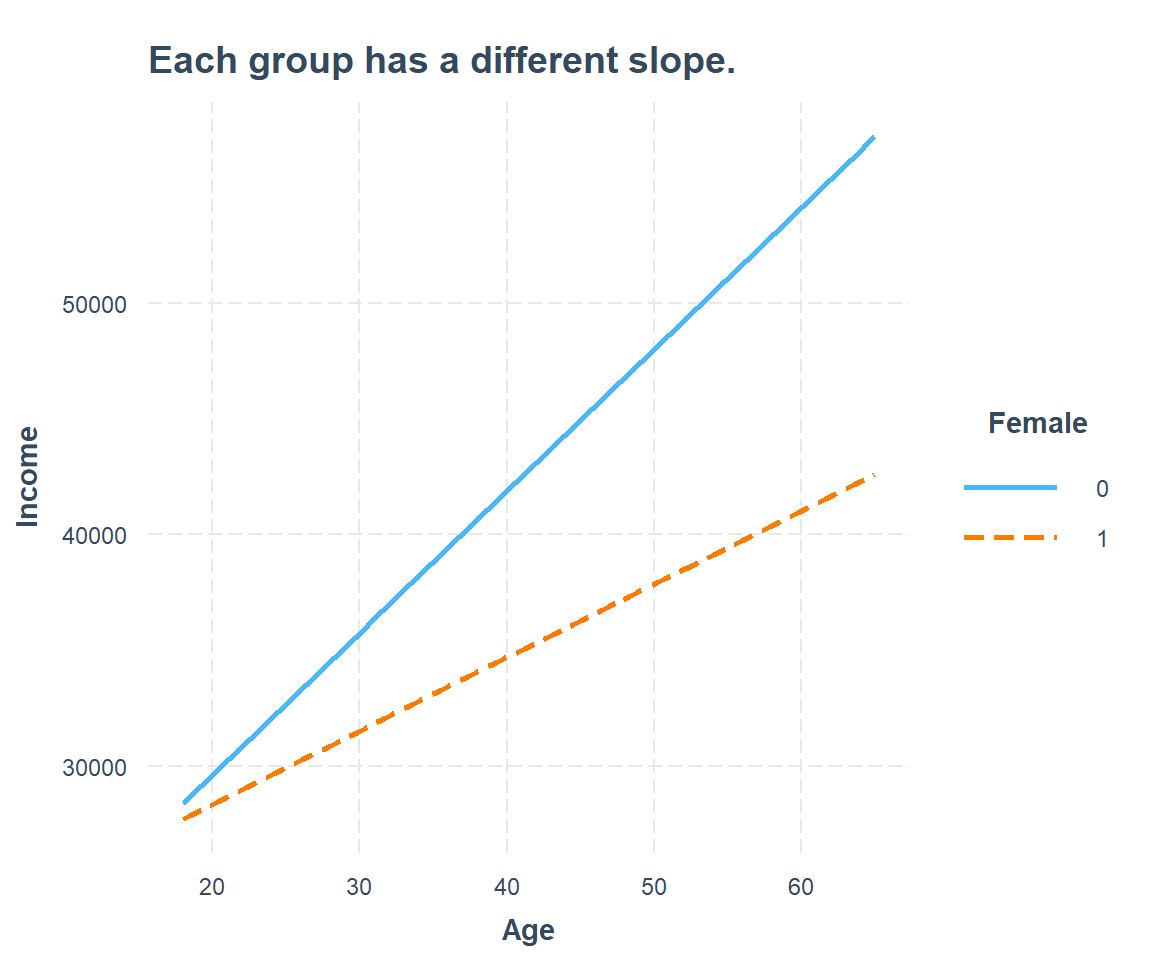

The visualization for non-parallel slopes looks like this:

interact_plot(interact3, pred = Age, modx = Female,

main.title = "Each group has a different slope.")

Conclusion

The fundamental truth is that the effect of age on income is positive but not identical between males and females. Men and women at the exact same age earn different annual incomes and the gap widens over time. There is effect heterogeneity.

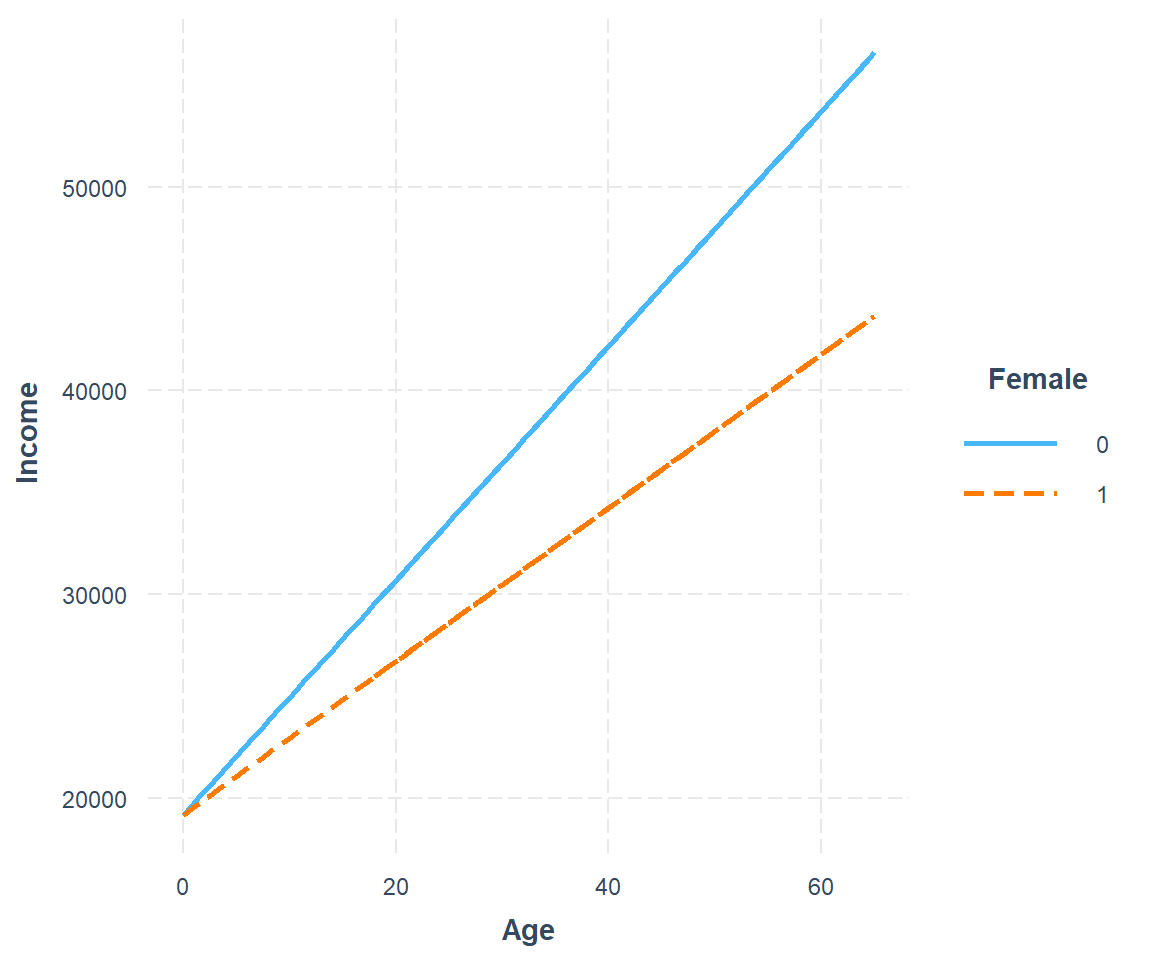

There are two more rather uncommon combination in which one of the main effects is dropped. Graphically, the result is two lines with same intercept and different slopes.

interact3b <- lm(Income ~ Age + Age:Female, data=soep)

interact_plot(interact3b, pred = Age, modx = Female) +

scale_x_continuous(limits = c(0, 65)) +

geom_smooth(method = "lm", se = FALSE, fullrange = TRUE)

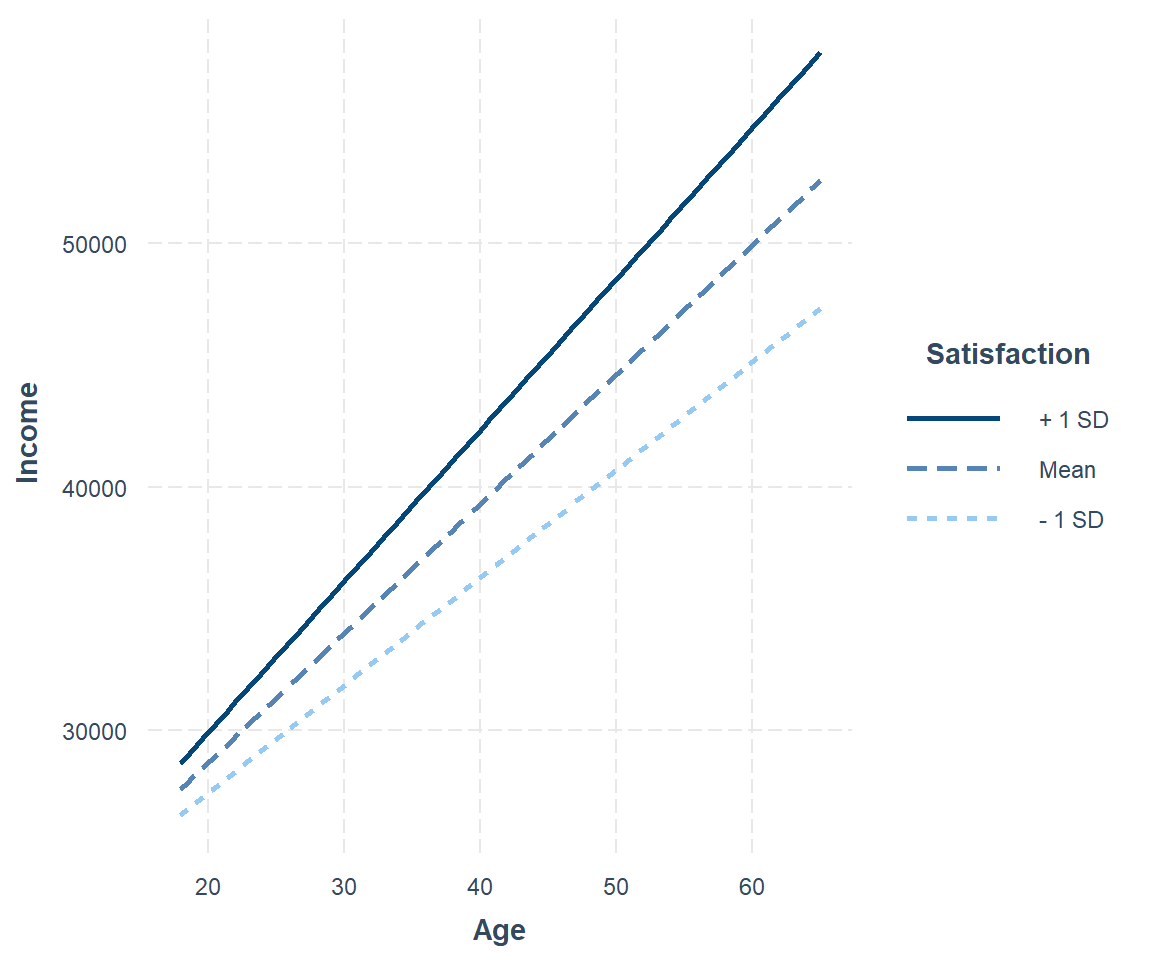

12.4.4 Continuous * Continuous

interact4 <- lm(Income ~ Age*Satisfaction, data=soep)

interact4

#>

#> Call:

#> lm(formula = Income ~ Age * Satisfaction, data = soep)

#>

#> Coefficients:

#> (Intercept) Age Satisfaction Age:Satisfaction

#> 20923.43 59.48 -378.77 62.08The interaction of two continuous variables is harder to interpret. There are conventions to help you choose the best values of the continuous moderator for plotting predicted values. But these conventions don't always work in every situation. For example, one convention suggested by Cohen and Cohen and popularized by Aiken and West is to use three values of the moderator: the mean, the value one standard deviation above, and the value one standard deviation below the mean. This is what interact_plot() does by default.

This shows you that interaction between two continuous variables works basically the same way as for a categorical and continuous variable. An interaction says that there's not a fixed offset: you need to consider both values of x1 and x2 simultaneously in order to predict y.

You can see that even with just two continuous variables, coming up with good visualizations are hard. But that's reasonable: you shouldn't expect it will be easy to understand how three or more variables simultaneously interact! But again, we're saved a little because we're using models for exploration, and you can gradually build up your model over time. The model doesn't have to be perfect, it just has to help you reveal a little more about your data.

12.5 Model Comparison

How to decide if the model with interaction is better than the model without interaction?

library(gt)

multiple3 <- lm(Income ~ Age + Female, data=soep)

multiple4 <- lm(Income ~ Age + Satisfaction, data=soep)

modelsummary(title = 'Comparison',

list("Income" = multiple3,

"Income" = interact3,

"Income" = multiple4,

"Income" = interact4),

gof_omit = 'R2|AIC|BIC|RMSE|Log.Lik.',

output = "gt") %>%

# column labels

tab_spanner(label = 'Interaction', columns = c(3,5)) | Income |

Interaction

|

Income | ||

|---|---|---|---|---|

| Income | Income | |||

| (Intercept) | 22487.063 | 17367.198 | 20923.425 | -230.558 |

| (2764.643) | (3438.645) | (13829.780) | (4385.352) | |

| Age | 501.348 | 612.892 | 59.480 | 527.982 |

| (57.643) | (72.860) | (296.198) | (57.826) | |

| Female1 | -8670.282 | 4649.196 | ||

| (1345.244) | (5506.496) | |||

| Age × Female1 | -296.045 | |||

| (118.698) | ||||

| Satisfaction | -378.773 | 2423.054 | ||

| (1794.752) | (450.609) | |||

| Age × Satisfaction | 62.082 | |||

| (38.496) | ||||

| Num.Obs. | 1211 | 1211 | 1211 | 1211 |